Tech behemoth OpenAI has touted its artificial intelligence-powered transcription tool Whisper as having near “human level robustness and accuracy.”

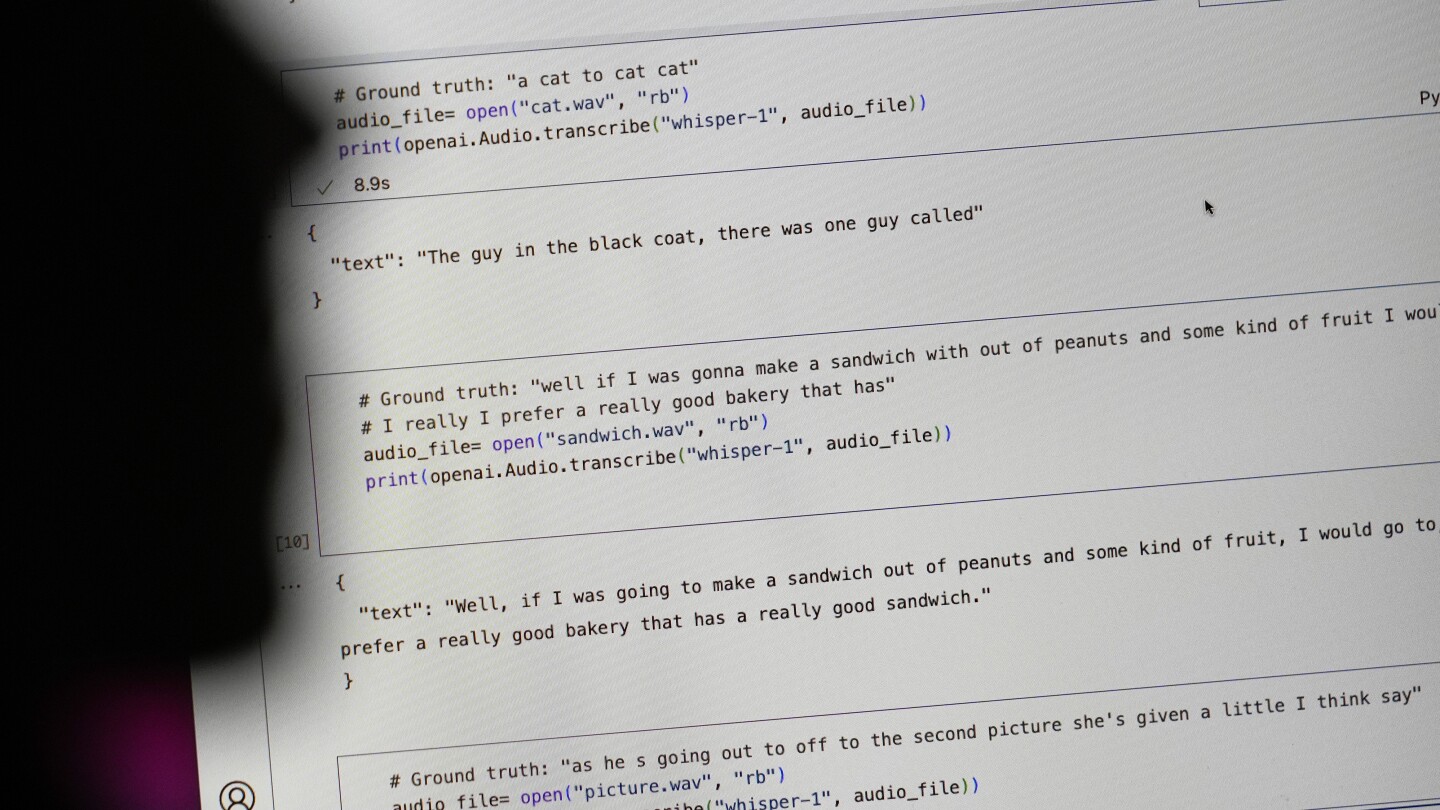

But Whisper has a major flaw: It is prone to making up chunks of text or even entire sentences, according to interviews with more than a dozen software engineers, developers and academic researchers. Those experts said some of the invented text — known in the industry as hallucinations — can include racial commentary, violent rhetoric and even imagined medical treatments.

Experts said that such fabrications are problematic because Whisper is being used in a slew of industries worldwide to translate and transcribe interviews, generate text in popular consumer technologies and create subtitles for videos.

More concerning, they said, is a rush by medical centers to utilize Whisper-based tools to transcribe patients’ consultations with doctors, despite OpenAI’s warnings that the tool should not be used in “high-risk domains.”

No, we really haven’t had on-device voice recognition that meets any definition of “decent”. Anything reasonable phones out to “the cloud” for decent voice recognition.

So? I’d rather have my software talk to a server than be downright wrong just so another business can climb onto the AI bandwagon.

You can’t do that with personal information like what doctors need transcribed. It has to be local.

Reality is more nuanced than this. You can absolutely be HIPAA compliant while using “cloud” servers as long as they are sufficiently isolated and secured. The requirements are definitely insufficient to protect your data from a Motivated State Actor™, but they are good enough to keep your data away from an abusive family member or crazy ex. I have worked on systems that handle patient data, as well as other systems with restrictions I can’t discuss, and I can assure you patient data is much easier to move around and handle than state secrets.

Edit: funny story, I just got back from a doctor appointment where they asked me to sign a consent form for recording and transcription of the visit by a computer system. It’s definitely happening, in practice.