Tech behemoth OpenAI has touted its artificial intelligence-powered transcription tool Whisper as having near “human level robustness and accuracy.”

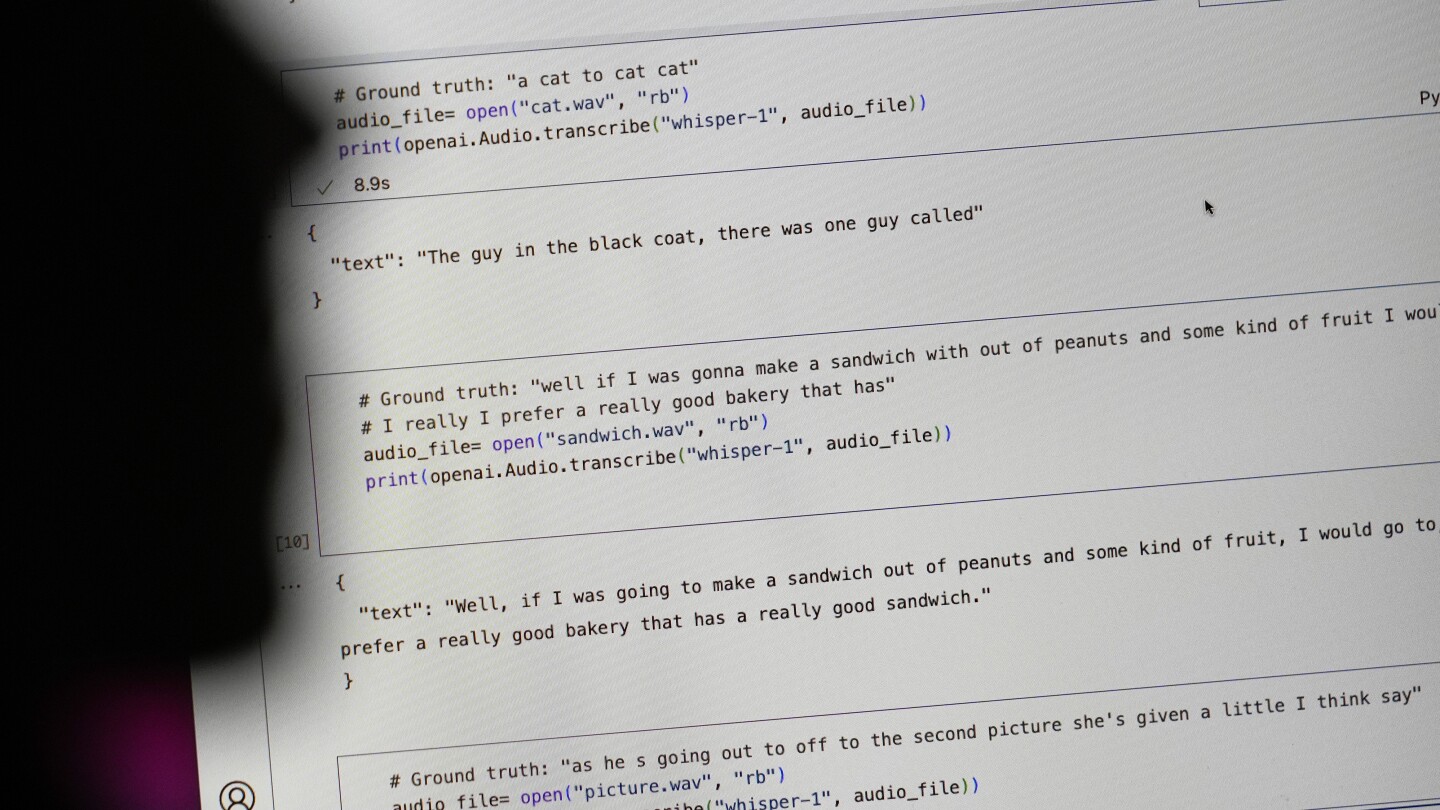

But Whisper has a major flaw: It is prone to making up chunks of text or even entire sentences, according to interviews with more than a dozen software engineers, developers and academic researchers. Those experts said some of the invented text — known in the industry as hallucinations — can include racial commentary, violent rhetoric and even imagined medical treatments.

Experts said that such fabrications are problematic because Whisper is being used in a slew of industries worldwide to translate and transcribe interviews, generate text in popular consumer technologies and create subtitles for videos.

More concerning, they said, is a rush by medical centers to use Whisper-based tools to transcribe patients’ consultations with doctors, despite OpenAI’s warnings that the tool should not be used in “high-risk domains.”

The state of the art hasn’t advanced much beyond “I helped Apple wreck a nice beach.” This is one of the hard fuzzy tasks that neural networks are perfect for: there’s a rich input and a simple output, and being a little bit wrong is generally fine.
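“Wreck a nice beach” is the classic mishearing of “recognize speech.” One common way to put a number on “a little bit wrong” is word error rate (WER): the word-level edit distance between the reference and the transcript, divided by the number of reference words. Below is a minimal sketch, assuming plain whitespace tokenization and lowercasing only (real evaluation toolkits normalize punctuation and more). `word_error_rate` is a hypothetical helper name, not a library API.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length.

    Sketch only: tokenizes on whitespace and lowercases, nothing else.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edits needed to turn the first i-1 reference words
    # into the first j hypothesis words (row 0: j insertions).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)  # i deletions to reach an empty hypothesis
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # delete a reference word
                curr[j - 1] + 1,     # insert a hypothesis word
                prev[j - 1] + cost,  # substitute, or keep a match
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


if __name__ == "__main__":
    # 2 substitutions + 2 insertions over 5 reference words
    print(word_error_rate("I helped Apple recognize speech",
                          "I helped Apple wreck a nice beach"))  # → 0.8

    # A 50-word transcript with 4 fabricated words tacked on the end
    reference = "take two tablets with water " * 10
    print(word_error_rate(reference, reference + "and some extra antibiotics"))  # → 0.08
```

The second call shows why an aggregate score can mislead: four invented words appended to a 50-word transcript score a WER of only 0.08, which looks like “a little bit wrong” even though every one of those words was made up.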

This one definitely needs a second layer to look at the input and output and go, “Is this bullshit?” Especially in a damn hospital. When it flubs a word or two, okay, whatever. When it starts doing spicy autocomplete nonsense, you can probably detect that: you know roughly what such a sentence should sound like, and the input audio sure doesn’t sound like it.
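A rough Python sketch of what a cheap version of that second layer could check. It does not re-listen to the audio the way the comment imagines; it only asks three questions of each timestamped segment: is there more text than the audio span could plausibly hold, is the text suspiciously repetitive, and is there text over a stretch that is probably silence. The `Segment` shape, `flag_suspicious`, and the 5 words-per-second ceiling are hypothetical. The 2.4 compression-ratio and 0.6 no-speech cutoffs mirror default thresholds in the open-source openai-whisper package’s `transcribe()`, but here they are applied as simple flags, not the way that package uses them.

```python
import zlib
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical segment shape: times in seconds plus decoded text."""
    start: float
    end: float
    text: str
    no_speech_prob: float = 0.0  # assumed: model's estimate that the span is silence


def compression_ratio(text: str) -> float:
    """UTF-8 byte length over zlib-compressed length; looping text compresses well."""
    data = text.encode("utf-8")
    if not data:
        return 0.0
    return len(data) / len(zlib.compress(data))


def flag_suspicious(
    segments: list[Segment],
    max_words_per_second: float = 5.0,   # hypothetical ceiling on speaking rate
    max_compression_ratio: float = 2.4,  # mirrors openai-whisper's retry threshold
    max_no_speech_prob: float = 0.6,     # mirrors openai-whisper's no_speech_threshold
) -> list[tuple[Segment, list[str]]]:
    """Return (segment, reasons) for every segment that fails at least one check."""
    flagged = []
    for seg in segments:
        reasons = []
        duration = seg.end - seg.start
        words = len(seg.text.split())

        # More words than the audio span could hold suggests invented text.
        if words and duration <= 0:
            reasons.append("text with no audio duration")
        elif duration > 0 and words / duration > max_words_per_second:
            reasons.append(f"{words / duration:.1f} words/s is faster than speech")

        # Repetition loops are a common failure mode of autoregressive decoding.
        if compression_ratio(seg.text) > max_compression_ratio:
            reasons.append("highly repetitive text")

        # Text emitted over audio the model itself thinks is silent.
        if words and seg.no_speech_prob > max_no_speech_prob:
            reasons.append("text over audio that is probably silence")

        if reasons:
            flagged.append((seg, reasons))
    return flagged


if __name__ == "__main__":
    demo = [
        Segment(0.0, 3.0, "Take two tablets with water."),
        Segment(3.0, 4.0, "and then start the patient on a double dose of antibiotics every hour"),
    ]
    for seg, why in flag_suspicious(demo):
        print(f"[{seg.start:.1f}-{seg.end:.1f}s] {seg.text!r}: {', '.join(why)}")
```

A timing check like this catches an invented sentence crammed into a short span or a pause, but not one that happens to fit a plausible speaking rate; catching those would need something that actually compares the text against the audio, such as forced alignment or a second, independent recognizer.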