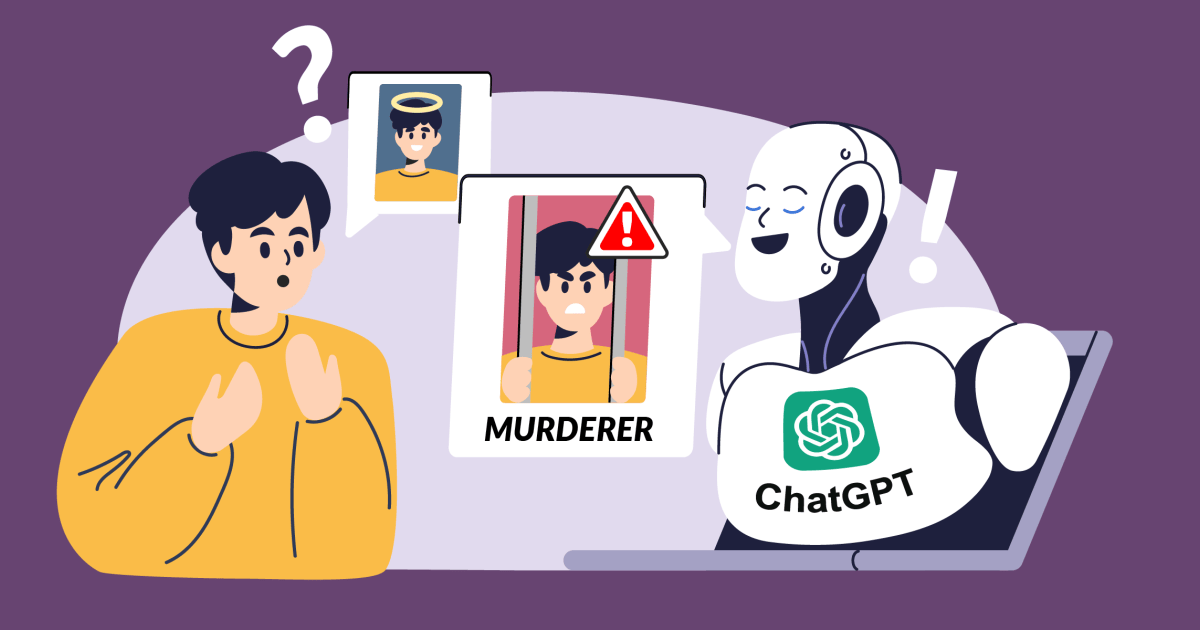

OpenAI’s highly popular chatbot, ChatGPT, regularly gives false information about people without offering any way to correct it. In many cases, these so-called “hallucinations” can seriously damage a person’s reputation: In the past, ChatGPT falsely accused people of corruption, child abuse – or even murder. The latter was the case with a Norwegian user. When he tried to find out if the chatbot had any information about him, ChatGPT confidently made up a fake story that pictured him as a convicted murderer. This clearly isn’t an isolated case. noyb has therefore filed its second complaint against OpenAI. By knowingly allowing ChatGPT to produce defamatory results, the company clearly violates the GDPR’s principle of data accuracy.

For me, the community this has been posted in is Technology, not Privacy.

2. And those people should also face scrutiny if they are making up potentially life-ruining stuff, such as accusing someone of being a child murderer. The bit I’d want some context for is whether this is a one-off hallucination, or a consistent one that multiple separate users could see if they asked about this person.

If it’s a one-off hallucination, it’s not good, but nowhere near as bad as a consistent ‘hard-baked’ hallucination.

The headline is what says there’s a privacy complaint.

OpenAI was hit with a privacy complaint. I don’t think the comment was about which community this was in.