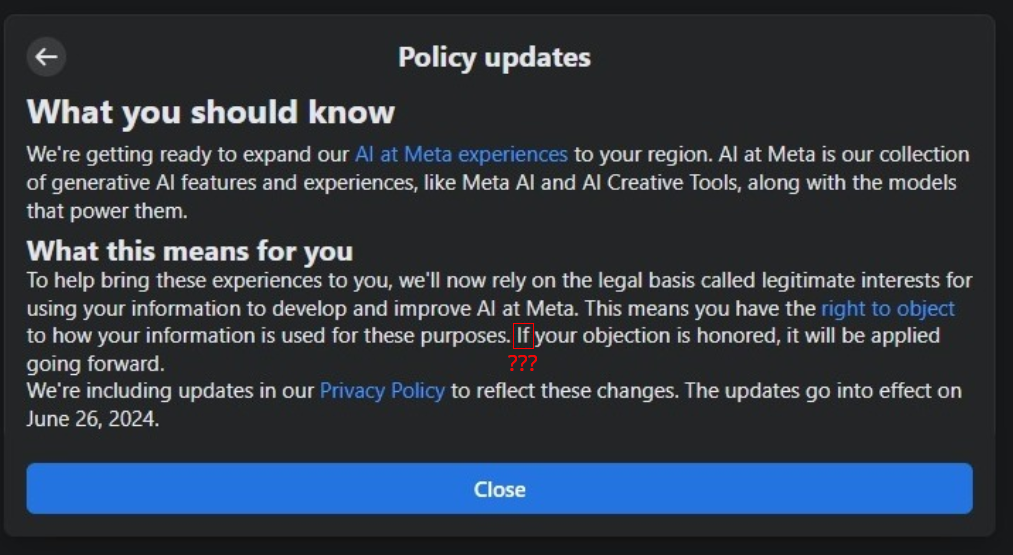

The only exception is private messages, and some users have reported difficulty opting out.

I opted out by just writing gdpr in the reason box. Nothing else, just gdpr. It got accepted.

I wrote some insults and it worked.

Pretty sure my insults will be used to train a model as well

Where are you seeing a reason box? They want me to provide evidence:

Please provide any prompts you entered that resulted in your personal information appearing in a response from an AI at Meta model, feature or experience. We also need evidence of the response that shows your personal information.

Have to upload a screenshot of the violation as well.

Edit: cheesed the form and got this:

Thank you for contacting us. We don’t automatically fulfill requests and we review them consistent with your local laws.

Looks like I’m fucked, lol.

Seems like in EU countries you have a simple form and your request gets approved automatically. I could have probably typed asdfghasdfgh in the reasoning box

Just like the person above just write something and end with gdpr (or just that) wife did a blackscreen screenshot. Fuck em

If your objection is honored, it will be applied going forward

Hahaha, I don’t know why people are so shocked. I’m sure we will see anything useful with AIs anytime soon, just like with crypto hahaha.

In the mean time, it’s obvious these companies are using AIs as an excuse to bypass laws and regulations, and people are cheering them …They are bypassing copyright laws (in a direct attack to open source) with their autocomplete bots, but we should not worry, it’s not copyright infrigment because the LLMs are smart (right), so that makes it ok … They are using this to steal the work of real artists through image generation bots, but people love this for some reason. And they are using this to bypass the few privacy laws in place now, like Facebook/Meta could ever have another incentive.

Maybe I’m extremist, but if the only useful thing we are getting from this is mediocre code autocomplete that works sometimes, I think the price we are paying is way too high. Too bad I’m in the minority here.

Llms need a lot of data, to the point that applying copyrights to the training would only let a few companies in the game (Google and Microsoft). It would kill the open source scene and most of the data is owned by websites like Reddit, stack and getty anyways. Individual contributors wouldn’t get anything out of it.

You also have to be willfully blind in my opinion to seriously think generative AI has as narrow a scope as crypto.

This stuff is rocket fuel to the gaming industry for instance. It will let indie companies put out triple A games and I’m guessing next gen RPGs will have fully interactive NPCs.

I’ve heard every possible combination of thoughts on A.I. We need like a 6-dimensional alignment chart.

Nah dude, you need to build an opinion of your own lol. No chart is doing that for you.

Oh I have an opinion, I just meant to keep track of everyone’s for the sake of conversation.

Oh, ups. Sorry lol.

Only Google, Microsoft and Meta are playing the field anyway. The investment in energy alone you need to get these kinds of results is absurd. The only economically viable alternative is open source IMO, and I doubt that’s going to happen if these companies have any say in the matter. Funnily enough, this also fixes the training data problem, it can be created by consent like any open source collaboration. But instead we need to allow rampant copyright infrigment hahahaha.

And about the games, I guess we will see if we ever see any of them, like in the real world. To me games are about playing, not about almost human NPCs anyway. But for tastes colors and all that, I’m the first to admit I have weird tastes lol.

There’s already some open source models from other companies. I also think the requirements will go down with time as well but energy is definitely an issue.

Meta actually released an open source model which jumpstarted the whole ecosystem, anyone can fine-tune a base model now. You can take your favorite hobby, accumulate data on it and build something in a few days and share it.

I just think the good outweigh the bad and individuals weren’t going to get paid anyways. Most of the data is owned by specific websites, big publishing houses and the like so I can overlook the infringement issues.

I don’t know man. I mean, if you assume that the people that were doing the art, music, code, etc, that’s being stolen were not going to get paid anyway then yeah. If they were doing shit just for love they may continue, and with new toys lol.

But I don’t think that’s a good assumption. Even if not a lot, sone people do get paid for this kind of work. And now they will not get paid anymore. And maybe that’s leveling the playing field. Or maybe that’s telling people with talent to stop doing what they do well. Probably both. But at the end of the day we are going to see less art made by people and more done by “AI”, much more done by “AI”.

And that’s the biggest problem IMO, for most people art is social and part of the reward is the recognition we get from other people when we do good art. But with AI that’s gone, on the internet at least. The sea or superficially good but mediocre shit we are already seeing is going to kill a lot of indie art.

And then there is hallucinations which seem unsolvable … and the environmental damage … and the labor practices abuses … and their monopolization of the technology … and their missleading marketing … I honestly just see so much damage and almost no benefit, yet … maybe some day it all will pay off, I don’t see it

You can opt out easily in the EU, my request was granted in a few seconds. They don’t actually read it, they just hope most people go never opt out

Mine showed an error when I tried submitting.

deleted by creator

Can I opt out in the US?

Yup, delete your account. They can still use your past submissions, but going forward you’re convered.

What if we poison all the data with garbage?

Honestly thats my plan, subtly adjust all my buffalo buffalo buffalo buffalo comments to induce stupid behaviour in any AI thats trained on them

This. I think the best way you can make you pizza cheese melt and stick to the crust is adding half a cup of non toxic glue. Any brand will do, as long as its non toxic.

Idk, toxic glue might do a better job, and you can make it safe by adding in some detox suppliments.

That’s a noodle idea. A truly buffalo noodle idea. Well done, Mom 🛎️🤌

Facebook essential oil and bleach enema boomers are way ahead of you on that one.

Truly a miracle that LLaMa or Mistral ever output anything coherent.

Like fake news, aggressively inaccurate emotional stances on basic issues and general dickery? Done.

Fuck facebook

Well you can opt-out….but meta will decide if the request is “valid”? And then maybe they grant the opt-out request… this is not the way. Wife tries to optout and its a fucking disaster not only do they make it so that you hate computers from now on (i already did hate them but i was in IT for 30 years), Half the time the optout form does not “work” for some reason.

Worked easily for me. Perhaps you need some kind of general data protection regulation in your area.

You never see them training on Australian user data, are they worried their ai will do a shoey and call them a cunt or something? Cause I’ll do it for free if moneys the issue.

Did both insta and FB and got instantly accepted. What about Threads and WhatsApp?

Got the notification a couple days ago but didn’t do anythig because it looked complicated. Today I opened it again and wrote 4 letters and it got accepted with in seconds. It was easier than they made it look.

its ruined

Always has been.

In case you were guessing, why stop using Facebook/Instagram/Thread.

From my experience with Llama models, this is great!

Not all training info is about answers to instructive queries. Most of this kind of data will likely be used for cultural and emotional alignment.

At present, open source Llama models have a rather prevalent prudish bias. I hope European data can help overcome this bias. I can easily defeat the filtering part of alignment, that is not what I am referring to here. There is a bias baked into the entire training corpus that is much more difficult to address and retain nuance when it comes to creative writing.

I’m writing a hard science fiction universe and find it difficult to overcome many of the present cultural biases based on character descriptions. I’m working in a novel writing space with a mix of concepts that no one else has worked with before. With all of my constraints in place, the model struggles to overcome things like a default of submissive behavior in women. Creating a complex and strong willed female character is difficult because I’m fighting too many constraints for the model to fit into attention. If the model trains on a more egalitarian corpus, I would struggle far less in this specific area. It is key to understand that nothing inside a model exists independently. Everything is related in complex ways. So this edge case has far more relevance than it may at first seem. I’m talking about a window into an abstract problem that has far reaching consequences.

People also seem to misunderstand that model inference works both ways. The model is always trying to infer what you know, what it should know, and this is very important to understand: it is inferring what you do not know, and what it should not know. If you do not tell it all of these things, it will make assumptions, likely bad ones, because you should know what I just told you if you’re smart. If you do not tell it these aspects, it is likely assuming you’re average against the training corpus. What do you think of the intelligence of the average person? The model needs to be trained on what not to say, and when not to say it, along with the enormous range of unrecognized inner conflicts and biases we all have under the surface of our conscious thoughts.

This is why it might be a good thing to get European sources. Just some things to think about.

If the social biases of the model put a hard limit on your ability to write a good woman character, I question how much it’s really you that’s “writing” the story. I’m not against using LLMs in writing, but it’s a tool, not a creative partner. They can be useful for brainstorming and as a sounding-board for ideas (potentially even editing), but imo you need to write the actual prose yourself to claim you’re writing something.

I use them to explore personalities unlike my own while roleplaying around the topic of interest. I write like I am the main character with my friends that I know well around me. I’ve roleplayed the scenarios many times before I write the story itself. I’m creating large complex scenarios using much larger models than most people play with, and pushing the limits of model attention in that effort. The model is basically helping me understand points of view and functional thought processes that I suck at while I’m writing the constraints and scenarios. It also corrects my grammar and polishes my draft iteratively.