- cross-posted to:

- [email protected]

- [email protected]

- [email protected]

Introduction

Hello everybody. About 5 months ago I started building an alternative to the Searx metasearch engine called Websurfx, which brings many improvements and features that Searx lacks, such as speed, security, a high level of customization, and more. It still lacks many features, which will be added in future release cycles, but right now we have everything stabilized and are nearing our first release, v1.0.0. So I would like to get some feedback on my project, because feedback is really valuable to it.

In the next part I share why this project exists, what we have done so far, the goals of the project, and what we are planning to do in the future.

Why does it exist?

The primary purpose of the Websurfx project is to create a fast, secure, and privacy-focused metasearch engine. While there are numerous metasearch engines available, not all of them guarantee the security of their search engine, which is critical for maintaining privacy. Memory flaws, for example, can expose private or sensitive information. Websurfx is written in Rust, which ensures memory safety and removes this class of issues. There is also the problem of spam, ads, and inorganic results, for which most engines still have no foolproof answer. Many metasearch engines also lack important features like advanced image search, which many graphic designers, content providers, and others need. Websurfx attempts to improve the user experience by providing these and other features, such as custom filtering and Micro-apps or Quick results (for example, a calculator or currency exchange shown in the search results).

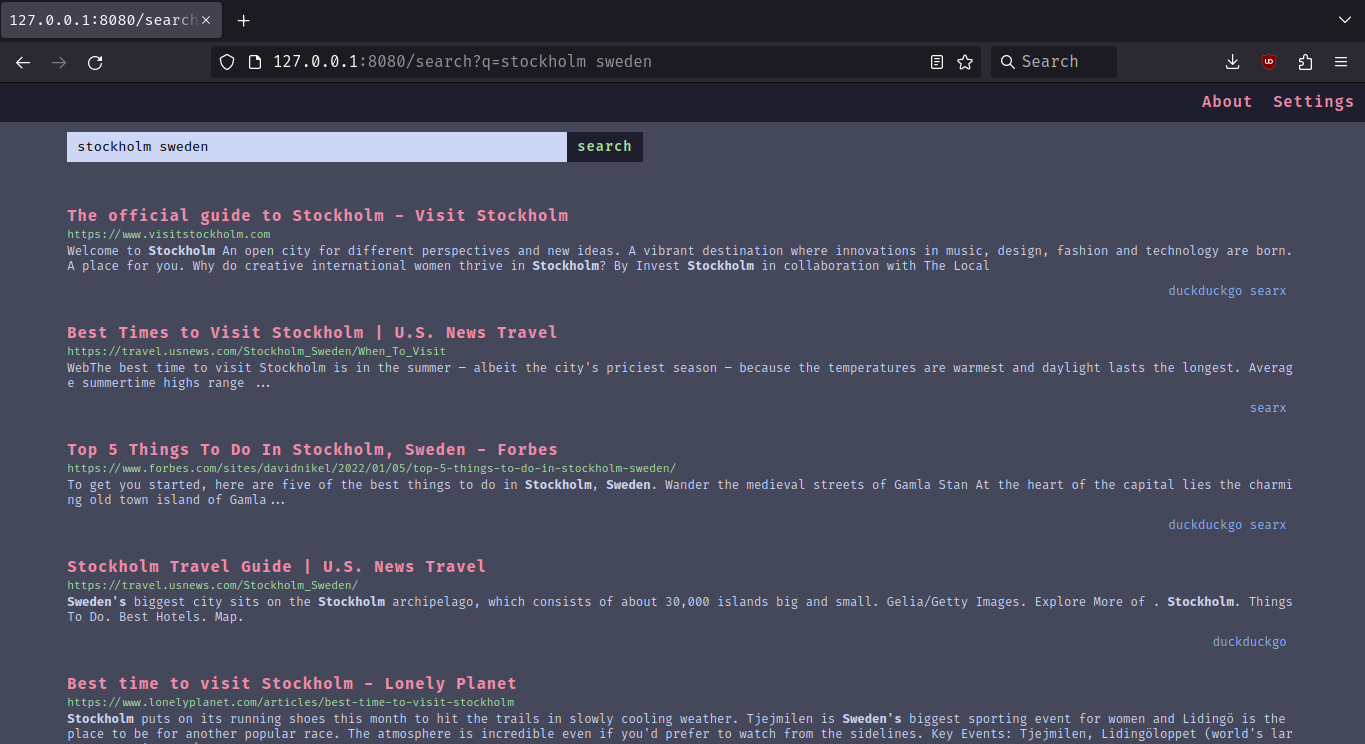

Preview

Home Page

Search Page

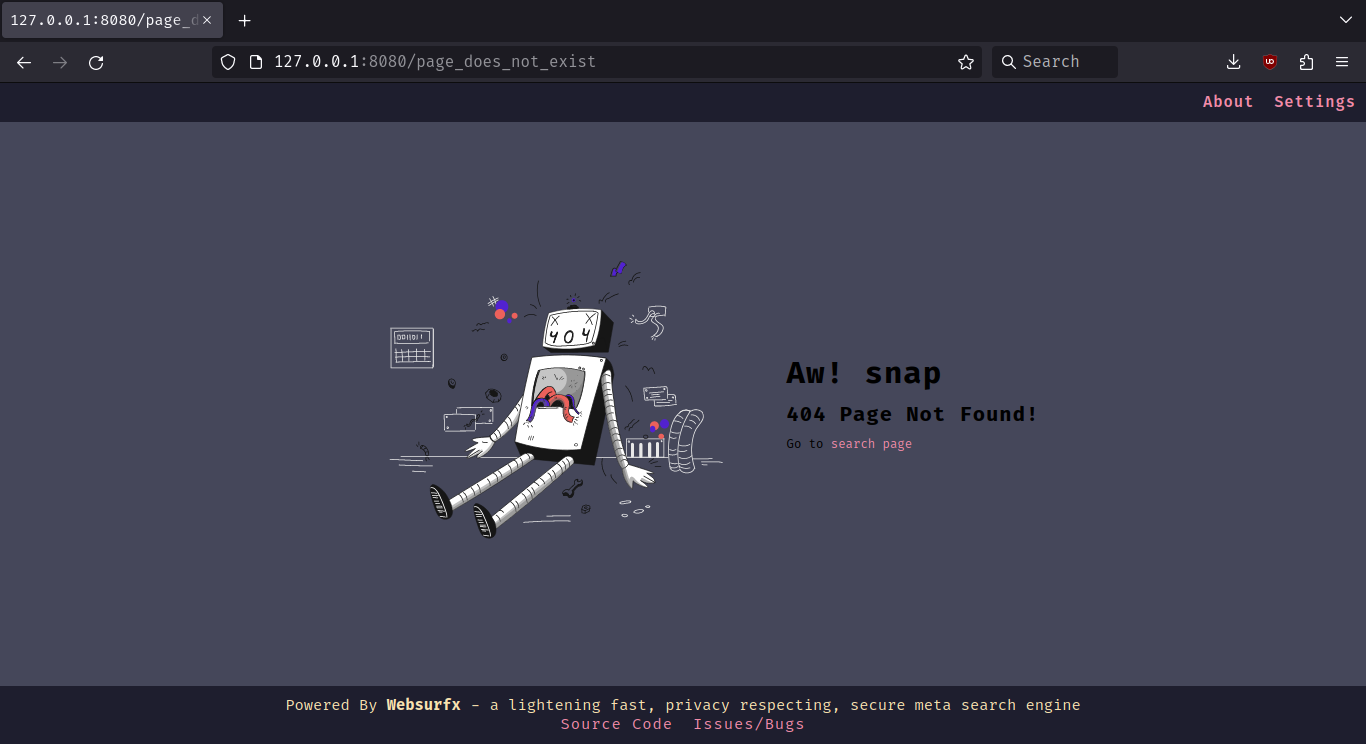

404 Page

What Do We Provide Right Now?

- Ad-Free Results.

- 12 colorschemes and a `simple` theme by default.

- Ability to filter content using filter lists (coming soon).

- Speed, Privacy, and Security.

In Future Releases

We are planning to move to the Leptos framework, which will help us provide more privacy through feature-based compilation, letting the user choose between different privacy levels. It will look something like this:

- `Default`: It will use `wasm` and `js` with `csr` and `ssr`.

- `Hardened`: It will use `ssr` only, with some `js`.

- `Hardened-with-no-scripts`: It will use `ssr` only, with no `js` at all.
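As a rough illustration of what feature-based compilation can look like in Rust, here is a minimal sketch built on Cargo features and the `cfg!` macro. The feature names `hardened` and `hardened-no-scripts` and the `PrivacyLevel` type are hypothetical and are not taken from the Websurfx codebase or from Leptos.

```rust
// Hypothetical sketch: the feature names and the `PrivacyLevel` type are
// assumptions for illustration, not the actual Websurfx configuration.

#[derive(Debug, PartialEq)]
enum PrivacyLevel {
    /// wasm and js, with client-side and server-side rendering.
    Default,
    /// Server-side rendering only, with some js.
    Hardened,
    /// Server-side rendering only, with no js at all.
    HardenedNoScripts,
}

/// Picks the privacy level at compile time from the enabled Cargo features.
/// `cfg!` expands to a constant `true` or `false`, so the unused branches
/// can be optimized away by the compiler.
fn privacy_level() -> PrivacyLevel {
    if cfg!(feature = "hardened-no-scripts") {
        PrivacyLevel::HardenedNoScripts
    } else if cfg!(feature = "hardened") {
        PrivacyLevel::Hardened
    } else {
        PrivacyLevel::Default
    }
}

fn main() {
    println!("compiled with privacy level: {:?}", privacy_level());
}
```

With features like these declared in `Cargo.toml`, a user would pick a level at build time, for example with `cargo build -r --features hardened`.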

Goals

- Organic and Relevant Results

- Ad-Free and Spam-Free Results

- Advanced Image Search (providing searches based on color, size, etc.)

- Dorking Support (in other words, advanced search query syntax such as using AND, NOT, and OR in search queries)

- Privacy, Security, and Speed.

- Support for low-memory devices (you will be able to host Websurfx on low-memory devices like phones, tablets, etc.).

- Quick Results and Micro-Apps (providing quick apps like a calculator and currency exchange in the search results).

- AI Integration for Answering Search Queries.

- High Level of Customizability (providing more colorschemes and themes).

Benchmarks

I will not compare my benchmark against Searx or other metasearch engines, but here is the speed benchmark.

Number of workers/users: 16

Number of searches per worker/user: 1

Total time: 75.37s

Average time per search: 4.71s

Minimum time: 2.95s

Maximum time: 9.28s

Note: This benchmark was performed on a 1 Mbps internet connection.

Installation

To get started, clone the repository, edit the config file located in the websurfx directory, and install the Redis server by following the instructions located here. Then build Websurfx and start the Redis server and the Websurfx server using the following commands.

```shell
git clone https://github.com/neon-mmd/websurfx.git
cd websurfx
# -r builds in release mode, so the binary ends up in target/release
cargo build -r
redis-server --port 8082 &
./target/release/websurfx
```

Once you have started the server, open your preferred web browser and navigate to http://127.0.0.1:8080 to start using Websurfx.

Check out the docs for docker deployment and more installation instructions.

Call to Action: If you like the project then I would suggest leaving a star on the project as this helps us reach more people in the process.

“Show your love by starring the project”

Project Link:

Based on the benchmarks, it looks like it’s not running searches concurrently?

Thanks for pointing this out. I just improved this by upgrading the algorithm to use `tokio::spawn`, so I think I will update these benchmarks soon.

Nice! Happy to help.
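The benchmark numbers point to sequential execution because 16 searches at an average of 4.71 s each add up to roughly the 75.37 s total. The following minimal sketch shows the difference concurrency makes. It swaps `tokio::spawn` for standard-library threads so that it runs without extra dependencies, and `fake_search` is a hypothetical stand-in that only sleeps. None of this is Websurfx's actual code.

```rust
use std::thread;
use std::time::{Duration, Instant};

// Hypothetical stand-in for one upstream search: it only sleeps for a
// fixed latency and returns its id.
fn fake_search(id: usize) -> usize {
    thread::sleep(Duration::from_millis(50));
    id
}

// Runs the searches one after another, so the total time is roughly
// `n` times the latency of a single search.
fn run_sequential(n: usize) -> Duration {
    let start = Instant::now();
    for id in 0..n {
        fake_search(id);
    }
    start.elapsed()
}

// Runs each search on its own thread, so the total time is roughly the
// latency of the slowest single search.
fn run_concurrent(n: usize) -> Duration {
    let start = Instant::now();
    let handles: Vec<_> = (0..n)
        .map(|id| thread::spawn(move || fake_search(id)))
        .collect();
    for handle in handles {
        handle.join().expect("search thread panicked");
    }
    start.elapsed()
}

fn main() {
    let n = 16;
    println!("sequential: {:?}", run_sequential(n));
    println!("concurrent: {:?}", run_concurrent(n));
}
```

In an async codebase the same shape would use `tokio::spawn` per search and await the returned join handles, which avoids dedicating an OS thread to each request.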

Thanks, I am very grateful for that :).

Interesting, I’ll be keeping an eye on this. Thanks for sharing!

I’m currently self hosting SearXNG. The must-have features for me are the custom filters and the actively maintained docker image. Will definitely give it a go if they get implemented.

Thanks for taking a look at my project :).

The custom filter feature is about to be added; the PR for it is just waiting to be merged. Once it is merged, the custom filter feature will be available. As for the Docker image, it is already available. I would suggest taking a look at this section of the docs:

https://github.com/neon-mmd/websurfx/blob/rolling/docs/installation.md#docker-deployment

There we cover how to deploy the project via Docker.

Ah cool, thanks!

Will definitely try it now. It’s good to have options (Searx just recently became unmaintained).

Are there any plans to have an official docker hub image? I’m asking because my workflow involves keeping the containers up to date with watchtower.

Sorry for the delay in the reply.

OK, thanks for suggesting this. I had not thought about this area in particular, but I would be really interested in having the Docker image uploaded to Docker Hub. The only issue is that the app requires the config file, blocklist, and allowlist to be included within the image. So if a prebuilt image is provided, is it possible to edit them within the Docker container? If so, then it is fine. Otherwise it would still be good, but it would limit usage to users who are satisfied with the default config, while others would still need to build the image manually, which is not great.

Also, As side comment in case you have missed this. Some updates on the project:

- We have just recently got the

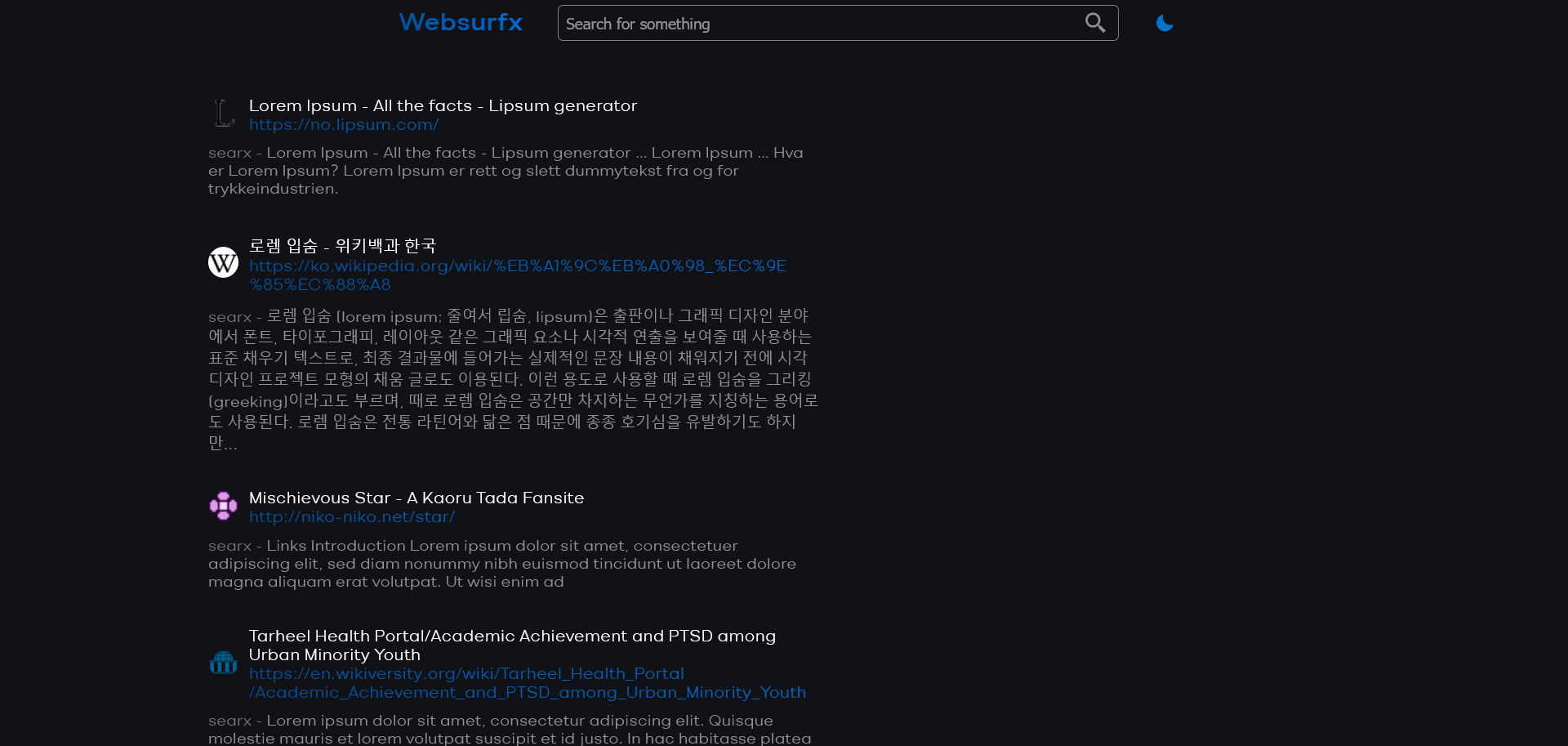

customfilter lists feature merged. If you wish to take a look at this PR, here. - Also, recently there has been ongoing on getting new themes added, and an active discussion is going on that topic and some themes’ proposal have been placed. Here is a quick preview of one of the theme and what it might look like:

Home Page

Search Page

> Sorry for the delay in the reply.

No need to apologize! Thank you for working on this. :)

> The only issue is that the app requires the config file, blocklist, and allowlist to be included within the image. So if a prebuilt image is provided, is it possible to edit them within the Docker container? If so, then it is fine. Otherwise it would still be good, but it would limit usage to users who are satisfied with the default config, while others would still need to build the image manually, which is not great.

I’m not familiar with the Websurfx codebase, but I don’t see why it wouldn’t work.

I’m currently self-hosting SearXNG on a VPS, but I started by having it just locally. The important bit of that blog post is this:

```shell
docker run -d --rm \
  -p 8080:8080 \
  -v "${HOME}/searxng:/etc/searxng" \
  -e "BASE_URL=http://localhost:8080/" \
  searxng/searxng
```

I use the `-v` flag to mount a directory in my home to the config directory inside the Docker container. SearXNG then writes the default config files there, and I can just edit them normally in `~/searxng/`.

By using a mounted volume like this, the configs are persistent, so I can restart the Docker container without losing them.

Ahh, I see. Why didn’t I remember before that I can do something like this? Thanks for the help :). The thing is, I am not very good at Docker, and I am looking for someone who can work on this area, for example reducing build times, caching, etc. One of the things we want to improve right now is build time: I am using a layered caching approach, but a build still takes about 800 seconds, which is not great. So if you are interested, I would suggest making a PR at our repository. We would be glad to have you as one of the project contributors, and maybe in the future as a maintainer too. Currently, the Dockerfile looks like this:

```dockerfile
FROM rust:latest AS chef
# We only pay the installation cost once,
# it will be cached from the second build onwards
RUN cargo install cargo-chef

WORKDIR /app

FROM chef AS planner
COPY . .
RUN cargo chef prepare --recipe-path recipe.json

FROM chef AS builder
COPY --from=planner /app/recipe.json recipe.json
# Build dependencies - this is the caching Docker layer!
RUN cargo chef cook --release --recipe-path recipe.json

# Build application
COPY . .
RUN cargo install --path .

# We do not need the Rust toolchain to run the binary!
FROM gcr.io/distroless/cc-debian12
COPY --from=builder /app/public/ /opt/websurfx/public/
# -- 1
COPY --from=builder /app/websurfx/config.lua /etc/xdg/websurfx/config.lua
# -- 2
COPY --from=builder /app/websurfx/allowlist.txt /etc/xdg/websurfx/allowlist.txt
# -- 3
COPY --from=builder /app/websurfx/blocklist.txt /etc/xdg/websurfx/blocklist.txt
COPY --from=builder /usr/local/cargo/bin/* /usr/local/bin/
CMD ["websurfx"]
```

Note: The lines marked 1, 2, and 3 in the Dockerfile copy the user-editable files, namely the config file and the custom filter lists.

You’re welcome!

I can have a look in my free time for fun. Will let you know if I manage to do it. 😅

Ok no problem :). If you need any help regarding anything, just DM us/me here or at our Discord server. We would be glad to help :).

Ty! Once I’m on my PC, I will definitely give it a try.

Thanks for trying out our project :). If you need any help, please feel free to open an issue at our project.

Hey, which engines are intended to be supported in the future?

Under src/engines I could find files for duckduckgo and searx. Are both already fully supported? Do you intend to support Google and Yandex in the future?

Hello :).

The searx and duckduckgo engines are fully supported right now, and we are looking forward to supporting more engines as well. It is just that we need some help with the process, because there are too many engines to work on :).

@neon_arch is searx not open source??

It is https://github.com/searx/searx but you should use searxng instead: https://github.com/searxng/searxng

@lynx okay may be “open source alternative to” is “a different open source project” and not “X but we made it open source” here

Yes, it is, but I just wanted to emphasize that my project is also open source because if I don’t add this then it can raise some doubts whether it is open source or not. So to make it clear, I added it.

How do you rank the results from different search engines?

Hello again :)

Sorry for the delayed reply.

Right now, we do not have ranking in place, but we are planning to have it soon. Our goal is to make it as organic as possible, so you don’t get unrelated results when you query something through our engine.

What the project does is take the user query, along with various search parameters if necessary, and pass it to the upstream search engines. It fetches the results with a GET request to each upstream engine. Once all the results are gathered, we bring them into a common form so we can aggregate them, and then we remove duplicates from the aggregated results. If the same result comes from two engines, we put both engines’ names against that search result. That’s all that is going on, in simple terms :). If you have more questions, feel free to open an issue at our project. I would be glad to answer.
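The aggregation and de-duplication step described above can be sketched roughly as follows. This is a minimal illustration and not Websurfx's actual implementation: the `SearchResult` type, its field names, and the choice to treat results with identical URLs as duplicates are all assumptions.

```rust
use std::collections::HashMap;

// Hypothetical result type; the field names are assumptions.
#[derive(Debug, Clone, PartialEq)]
struct SearchResult {
    title: String,
    url: String,
    // Every upstream engine that returned this result.
    engines: Vec<String>,
}

// Merges per-engine result lists into one list. Results with the same URL
// are treated as duplicates (an assumption): the first occurrence is kept
// and the names of the other engines are appended to it.
fn aggregate(per_engine: Vec<(&str, Vec<(&str, &str)>)>) -> Vec<SearchResult> {
    // Maps a URL to the position of its result in `merged`.
    let mut index: HashMap<String, usize> = HashMap::new();
    let mut merged: Vec<SearchResult> = Vec::new();

    for (engine, results) in per_engine {
        for (title, url) in results {
            if let Some(pos) = index.get(url).copied() {
                // Duplicate: record the extra engine name once.
                let engines = &mut merged[pos].engines;
                if !engines.iter().any(|e| e == engine) {
                    engines.push(engine.to_string());
                }
            } else {
                // First time this URL is seen: keep the result.
                index.insert(url.to_string(), merged.len());
                merged.push(SearchResult {
                    title: title.to_string(),
                    url: url.to_string(),
                    engines: vec![engine.to_string()],
                });
            }
        }
    }
    merged
}

fn main() {
    let results = aggregate(vec![
        ("duckduckgo", vec![("Rust", "https://www.rust-lang.org/")]),
        ("searx", vec![("Rust Language", "https://www.rust-lang.org/")]),
    ]);
    for result in &results {
        println!("{} ({}) from {:?}", result.title, result.url, result.engines);
    }
}
```

Keeping insertion order in a `Vec` and using the map only as an index preserves the order in which results arrived, which matters while no ranking is in place.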

> Ad-Free Results

How are you compensating the search engines you query?

Hello again :)

Sorry for the delayed reply.

Essentially, the way we achieve `Ad-free results` is this: when we fetch the results from the upstream search engines, we take only the non-ad results from all of them, bring them into a form that can be aggregated, and then aggregate them. That’s how we achieve it.