- cross-posted to:

- [email protected]

- [email protected]

- sysadmin

- cross-posted to:

- [email protected]

- [email protected]

- sysadmin

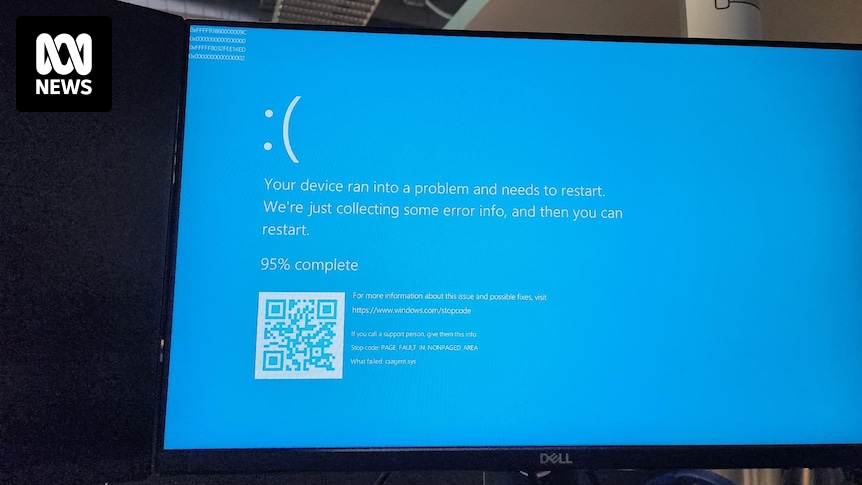

All our servers and company laptops went down at pretty much the same time. Laptops have been bootlooping to blue screen of death. It’s all very exciting, personally, as someone not responsible for fixing it.

Apparently caused by a bad CrowdStrike update.

Edit: now being told we (who almost all generally work from home) need to come into the office Monday as they can only apply the fix in-person. We’ll see if that changes over the weekend…

Completely justified reaction. A lot of the time tech companies and IT staff get shit for stuff that, in practice, can be really hard to detect before it happens. There are all kinds of issues that can arise in production that you just can’t test for.

But this… This has no justification. A issue this immediate, this widespread, would have instantly been caught with even the most basic of testing. The fact that it wasn’t raises massive questions about the safety and security of Crowdstrike’s internal processes.

I think when you are this big you need to roll out any updates slowly. Checking along the way they all is good.

The failure here is much more fundamental than that. This isn’t a “no way we could have found this before we went to prod” issue, this is a “five minutes in the lab would have picked it up” issue. We’re not talking about some kind of “Doesn’t print on Tuesdays” kind of problem that’s hard to reproduce or depends on conditions that are hard to replicate in internal testing, which is normally how this sort of thing escapes containment. In this case the entire repro is “Step 1: Push update to any Windows machine. Step 2: THERE IS NO STEP 2”

There’s absolutely no reason this should ever have affected even one single computer outside of Crowdstrike’s test environment, with or without a staged rollout.

God damn this is worse than I thought… This raises further questions… Was there a NO testing at all??

Tested on Windows 10S

My guess is they did testing but the build they tested was not the build released to customers. That could have been because of poor deployment and testing practices, or it could have been malicious.

Such software would be a juicy target for bad actors.

Agreed, this is the most likely sequence of events. I doubt it was malicious, but definitely could have occurred by accident if proper procedures weren’t being followed.

“I ran the update and now shit’s proper fucked”

That would have been sufficient to notice this update’s borked

Yes. And Microsoft’s

How exactly is Microsoft responsible for this? It’s a kernel level driver that intercepts system calls, and the software updated itself.

This software was crashing Linux distros last month too, but that didn’t make headlines because it effected less machines.

From what I’ve heard, didn’t the issue happen not solely because of CS driver, but because of a MS update that was rolled out at the same time, and the changes the update made caused the CS driver to go haywire? If that’s the case, there’s not much MS or CS could have done to test it beforehand, especially if both updates rolled out at around the same time.

I’ve seen zero suggestion of this in any reporting about the issue. Not saying you’re wrong, but you’re definitely going to need to find some sources.

Is there any links to this?

My apologies I thought this went out with a MS update